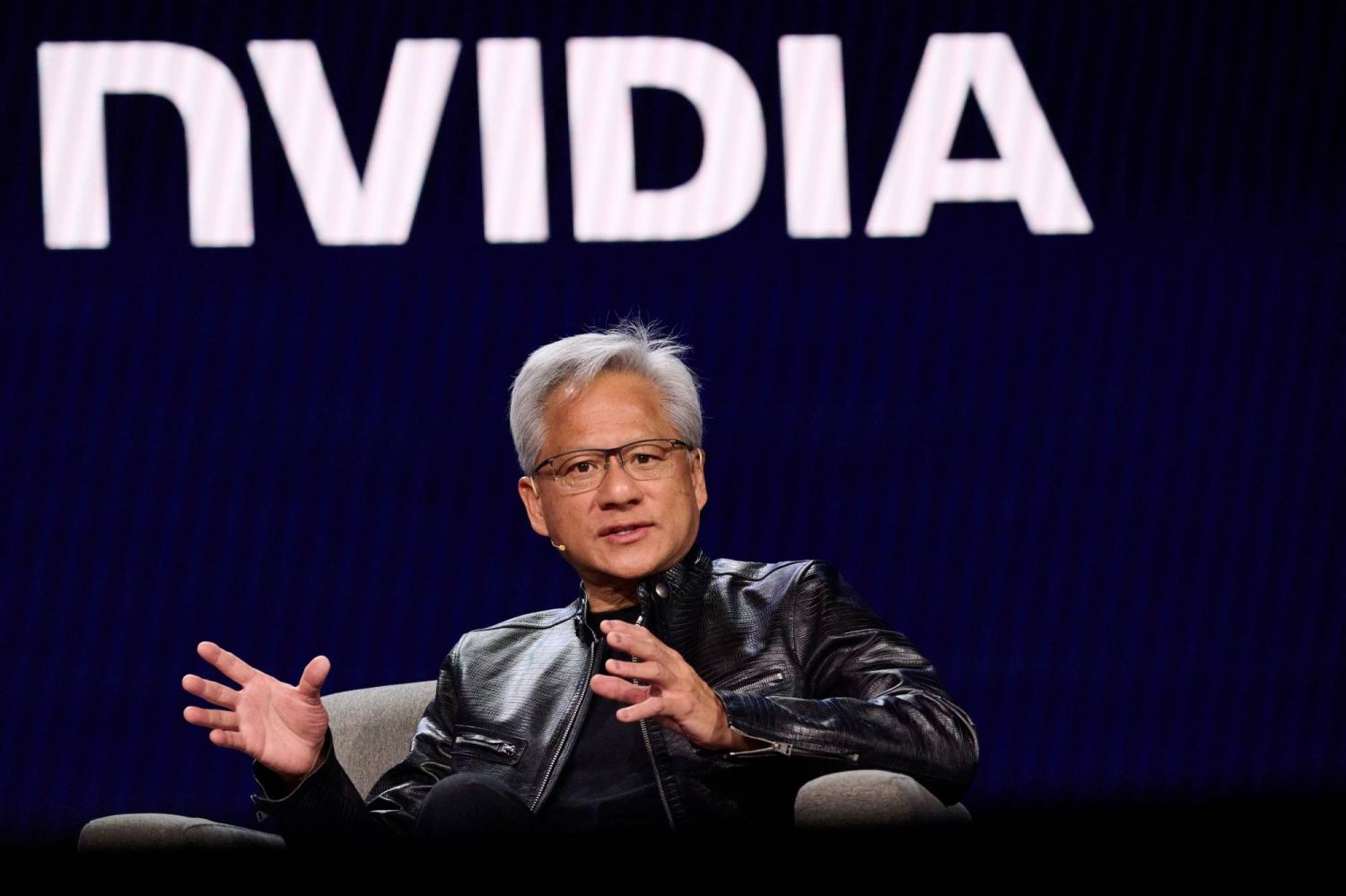

Jensen Huang, chief executive officer of Nvidia Corp., speaks during the 2026 CES event in Las Vegas, Nevada, US, on Tuesday, Jan. 6, 2026. Siemens and Nvidia announced an expansion of their strategic partnership to develop industrial and physical AI solutions to bring AI-driven innovation to industrial workflow. Photographer: Bridget Bennett/Bloomberg

Jensen Huang does not do understated. At Nvidia’s annual GTC conference in San Jose on Tuesday, the CEO who turned a gaming chip company into the world’s most valuable business stood in front of a room full of developers and said, with a straight face, that Nvidia will generate more than $1 trillion in revenue from AI chips by 2027.

Then, almost as a footnote, he mentioned that Nvidia is restarting production of its H200 chips for China. After a 10-month freeze. Just like that.

Both of those things deserve attention. One of them is getting it.

The China Situation, Explained

For the better part of a year, Nvidia’s relationship with China was a mess.

The US government kept tightening export controls. Beijing kept pushing domestic companies toward Chinese-made alternatives.

Nvidia’s revenue from China, which once accounted for more than 10% of total sales, had effectively dried up.

As recently as late February, Nvidia disclosed in earnings materials that despite receiving approval to ship “small amounts” of H200 chips to China, it had not generated a single dollar in revenue from those sales.

That changed at GTC. Huang confirmed Nvidia now has licenses for “many customers in China” for H200 sales, including Tencent, Alibaba, ByteDance, and DeepSeek.

Purchase orders are in. Manufacturing is restarting. “Our supply chain is getting fired up,” he told reporters.

The deal structure is unusual: the US government takes a 25% cut of H200 revenue from China sales, which is either a creative form of industrial policy or a toll booth on Nvidia’s own business, depending on your perspective.

But the H200 is last generation. Nvidia’s current flagship, the Blackwell architecture, remains banned from China. And Beijing’s regulators have been glacially slow to approve imports even of the chips that are theoretically allowed.

So Huang is also preparing a second move: a version of Nvidia’s Groq inference chip, specifically designed to comply with Chinese market restrictions, expected to land by May.

Groq chips handle inference, the day-to-day task of running AI models, rather than training.

Inference is the market where Nvidia faces the most domestic Chinese competition, which makes entering it a statement as much as a business decision.

The $1 Trillion Number

Now for the bigger headline. Huang’s forecast that Nvidia will hit $1 trillion in AI data center revenue by 2027 is, depending on who you ask, either a bold but defensible projection or the most confident thing anyone has said in public this year.

Nvidia’s data center revenue in fiscal year 2025 was $115 billion. It’s growing at roughly 93% year-over-year.

The global AI infrastructure buildout, with $700 billion being poured into data centers in the US alone, shows no sign of slowing.

Microsoft, Meta, Google, Amazon, and xAI are all spending more on compute in 2026 than they did in 2025, and Nvidia’s chips are in essentially all of it.

The caveat nobody wants to say loudly: $1 trillion in two years from a base of $115 billion requires either the entire global economy to keep pouring money into AI infrastructure at an accelerating rate, or China to come back online as a major revenue source, or both.
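To put rough numbers on that caveat, here is a back-of-the-envelope sketch. It rests on two assumptions that are not from Huang's remarks: that the $1 trillion figure means annual revenue, and that growth compounds over exactly two yearly periods from the $115 billion base. Under those assumptions, Nvidia would need to grow about 195% a year, roughly double its current pace. Holding today's 93% rate would land it near $428 billion instead.

```python
# Back-of-the-envelope check. Assumptions (not from the article): the
# $1 trillion target is read as annual revenue, and growth compounds over
# exactly two yearly periods from the fiscal 2025 base.
base_revenue_bn = 115.0      # fiscal 2025 data center revenue, per the article
target_revenue_bn = 1000.0   # the $1 trillion figure
current_growth = 0.93        # roughly 93% year-over-year, per the article
years = 2

# Constant annual growth rate needed to reach the target from the base.
required_growth = (target_revenue_bn / base_revenue_bn) ** (1 / years) - 1

# Where revenue lands if the current growth rate simply holds.
projected_bn = base_revenue_bn * (1 + current_growth) ** years

print(f"Required annual growth: {required_growth:.0%}")
print(f"Revenue after {years} years at {current_growth:.0%} growth: ${projected_bn:.0f}B")
```

If the forecast is instead cumulative revenue through 2027, the bar is lower and this arithmetic does not apply as written.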

Investors seemed uncertain which way to lean. Nvidia’s stock dipped slightly after the conference despite the headline numbers, which is the market’s way of saying “we’ll believe it when we see it.”

The Thing Huang Said That Nobody Picked Up

Buried near the end of the GTC press conference, Huang pushed back on the wave of AI safety warnings that have been circulating, including from Anthropic, which recently drew red lines around autonomous AI warfare and mass surveillance.

Huang’s response was pointed: “Scaring everybody about a science fiction version of AI is a little bit too arrogant.” He said humility was needed, that AI needs to go more places, not fewer, and that the industry should “continuously learn” rather than issue warnings.

It was a direct shot at the labs drawing guardrails around the technology Nvidia’s chips are powering.

Huang’s implicit argument: the chip seller doesn’t want the application constrained. Which is a coherent business position. Whether it’s a coherent safety position is a different conversation, one Nvidia has a financial interest in not having.

What This Week Actually Means

GTC 2026 confirmed a few things at once. China is back on the table for Nvidia, partially, conditionally, expensively, but back.

The inference market is the next battleground, and Nvidia is moving to dominate it the same way it dominated training. And Jensen Huang is still the most confident person in any room he walks into, which in this industry is either a superpower or a liability, and so far has consistently turned out to be the former.

One trillion dollars by 2027. Write it down. Check back in eighteen months.