Right now, thousands of Nvidia Blackwell GPUs are being installed in data centers around the world.

Microsoft. Google. Meta. Amazon. CoreWeave. Companies are spending hundreds of billions of dollars deploying the chip that Nvidia spent two years telling everyone was the future of AI.

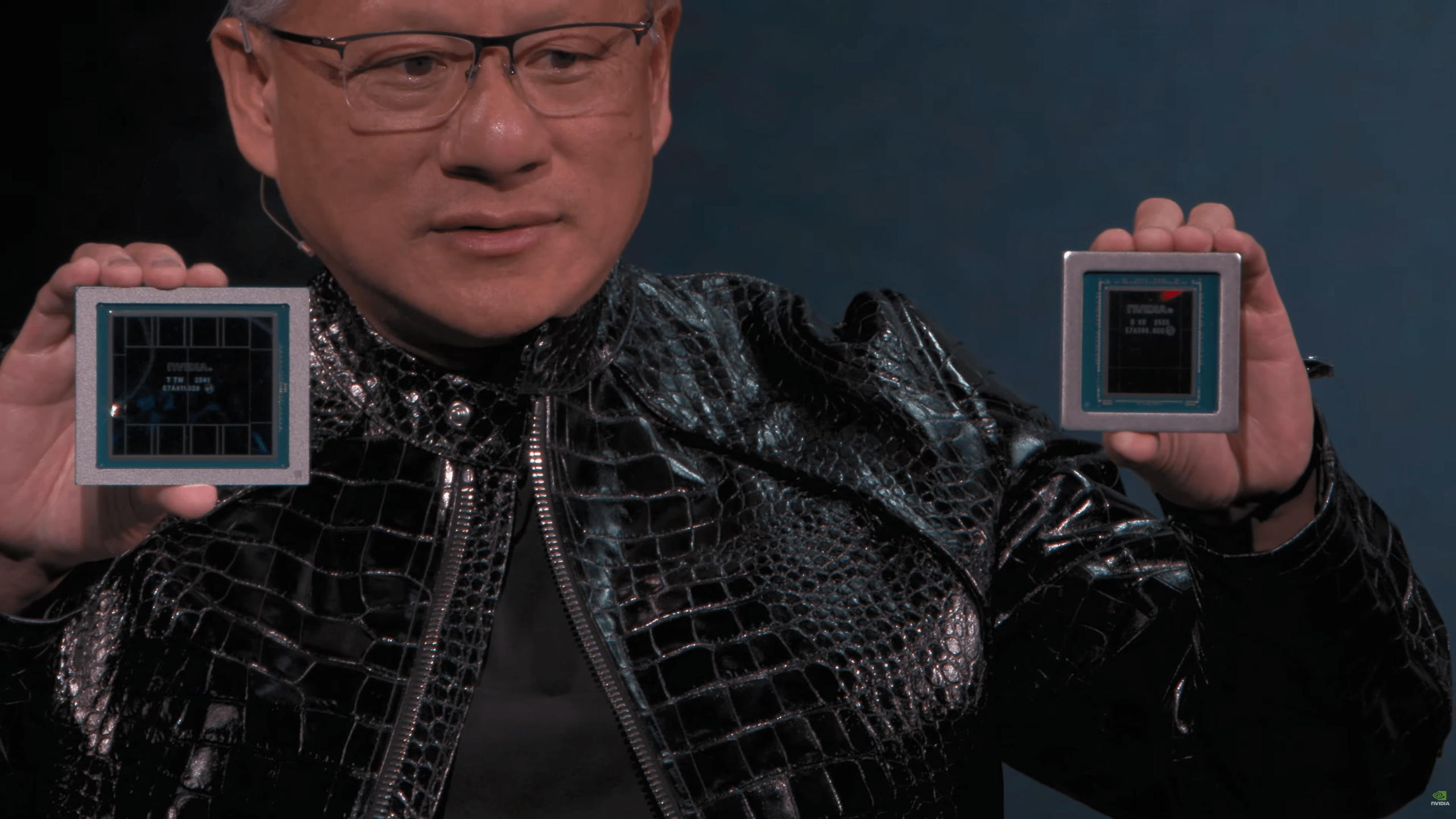

On March 16, Nvidia announced what comes next. And what comes next makes Blackwell look like a warmup act.

The Vera Rubin platform, unveiled in full at Nvidia’s GTC conference in San Jose, is seven chips in full production, five rack configurations, and a complete rethinking of what an AI factory looks like.

It delivers 5x more inference performance and 3.5x more training performance than Blackwell.

It costs one-tenth as much to generate a token.

And it ships in the second half of this year.

Seven Chips, One System

Nvidia built Blackwell around a single flagship GPU. Vera Rubin is different in kind, not just degree.

The platform comprises seven co-designed chips, each handling a specific phase of the AI pipeline.

The Rubin GPU handles training and compute-heavy inference. The Vera CPU, with 88 cores and 1.5 terabytes of LPDDR5X memory, handles data processing at twice the speed of its predecessor.

The newly added Groq 3 LPU, acquired through Nvidia’s $20 billion Groq purchase in December, handles the low-latency decode phase of inference, generating output tokens with 35 times higher throughput per megawatt than current alternatives.

Three networking and storage chips, the NVLink 6 Switch, ConnectX-9 SuperNIC, and BlueField-4 DPU, connect everything into a single coherent system.

The flagship configuration is the Vera Rubin NVL72 rack. Seventy-two Rubin GPUs and 36 Vera CPUs, connected via NVLink 6, operating as a single accelerator.

It delivers 3.6 exaflops of inference compute. It carries 20.7 terabytes of HBM4 memory with 1.6 petabytes per second of bandwidth.
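To put the rack-level numbers in per-chip terms, here is a quick back-of-the-envelope sketch. It assumes the quoted totals split evenly across the 72 GPUs and that the units are decimal (1 exaflop = 1,000 petaflops, 1 terabyte = 1,000 gigabytes). The function and variable names are illustrative, not anything from Nvidia.

```python
# Rack-level figures quoted above for the Vera Rubin NVL72.
GPUS_PER_RACK = 72
RACK_INFERENCE_EXAFLOPS = 3.6
RACK_HBM4_TERABYTES = 20.7
RACK_HBM4_BANDWIDTH_PBPS = 1.6  # petabytes per second


def per_gpu_share(rack_total: float, unit_scale: float = 1000.0) -> float:
    """Split a rack-level total evenly across the GPUs.

    unit_scale converts to the next smaller decimal unit
    (exa -> peta, tera -> giga, peta -> tera). An even split is an
    assumption made for illustration only.
    """
    return rack_total * unit_scale / GPUS_PER_RACK


petaflops_per_gpu = per_gpu_share(RACK_INFERENCE_EXAFLOPS)
hbm_gb_per_gpu = per_gpu_share(RACK_HBM4_TERABYTES)
bandwidth_tbps_per_gpu = per_gpu_share(RACK_HBM4_BANDWIDTH_PBPS)

print(f"Inference compute per GPU: {petaflops_per_gpu:.1f} petaflops")
print(f"HBM4 capacity per GPU:     {hbm_gb_per_gpu:.1f} GB")
print(f"HBM4 bandwidth per GPU:    {bandwidth_tbps_per_gpu:.1f} TB/s")
```

Under those assumptions, each Rubin GPU works out to roughly 50 petaflops of inference compute and a little under 290 GB of HBM4.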

The entire rack is fanless, tubeless, and cableless, cooled entirely with liquid. Installation time: five minutes, down from two hours for Blackwell.

Scale multiple NVL72 racks into a 40-rack POD and you get 60 exaflops of compute. Jensen Huang called it “a generational leap.” He was not being modest, but he was also not wrong.

What “10x Lower Token Cost” Actually Means

The most consequential number in the entire announcement is not the performance figure. It is the cost figure.

Generating AI responses, whether that is a ChatGPT reply, a Claude analysis, a Gemini search result, or any other AI output, requires processing tokens.

Tokens are the basic unit of AI computation. The cost of running AI at scale is essentially the cost of generating one token multiplied by the number of tokens generated.

Vera Rubin cuts the cost per token by a factor of ten compared to Blackwell.

That single number has enormous implications. AI applications that were only marginally economical on Blackwell become comfortably viable on Rubin.

AI applications that were not economical at all on Blackwell become worth building on Rubin. The entire cost structure of deploying AI at scale drops by an order of magnitude.

That is not just a hardware upgrade. That is a market expansion. Every business that looked at the economics of AI inference and decided it was too expensive to deploy at scale now needs to redo that calculation.
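The calculation those businesses need to redo can be sketched in a few lines. Everything numeric here is hypothetical: the per-million-token price, the monthly token volume, and the revenue figure are made-up placeholders, and only the 10x ratio comes from Nvidia's claim.

```python
def serving_cost(tokens: int, cost_per_million_tokens: float) -> float:
    """Total cost = cost per token x number of tokens generated."""
    return tokens / 1_000_000 * cost_per_million_tokens


def is_viable(revenue: float, tokens: int, cost_per_million_tokens: float) -> bool:
    """An application is viable here if revenue exceeds token-generation cost."""
    return revenue > serving_cost(tokens, cost_per_million_tokens)


# Hypothetical placeholder price, NOT a real Blackwell figure.
BLACKWELL_COST_PER_MILLION = 2.00
# The claimed 10x reduction applied to that placeholder.
RUBIN_COST_PER_MILLION = BLACKWELL_COST_PER_MILLION / 10

# Hypothetical application: 5 billion tokens a month, $4,000 in monthly revenue.
monthly_tokens = 5_000_000_000
monthly_revenue = 4_000.0

blackwell_cost = serving_cost(monthly_tokens, BLACKWELL_COST_PER_MILLION)
rubin_cost = serving_cost(monthly_tokens, RUBIN_COST_PER_MILLION)

print(f"Blackwell: ${blackwell_cost:,.0f}/month, viable: "
      f"{is_viable(monthly_revenue, monthly_tokens, BLACKWELL_COST_PER_MILLION)}")
print(f"Rubin:     ${rubin_cost:,.0f}/month, viable: "
      f"{is_viable(monthly_revenue, monthly_tokens, RUBIN_COST_PER_MILLION)}")
```

With these placeholder inputs the same application loses money at the higher token price and clears its costs at one-tenth of it, which is the shift the 10x claim implies.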

The Problem for Everyone Who Just Bought Blackwell

The upgrade cycle in AI hardware has been relentless since 2022, but Vera Rubin is a particularly sharp turn.

Blackwell deployments at major cloud providers only began in earnest in late 2025 and early 2026. Many of the largest Blackwell orders are still being fulfilled. Companies spent years on waiting lists for Blackwell GPUs. Some are still waiting.

Now Nvidia has announced that Rubin ships this year, with 5x better inference performance at one-tenth the token cost.

The companies most exposed are the ones who locked in the largest Blackwell commitments at the highest prices. Microsoft, Meta, Google, and Amazon all moved fast on Blackwell because they had to.

Now they face a choice: run Blackwell through its depreciation cycle while competitors deploy Rubin, or accelerate the transition and absorb the cost of a shorter-than-expected Blackwell lifespan.

Neither option is comfortable. Both will be chosen by different companies depending on their competitive pressure and their CFO’s appetite for write-downs.
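To make the write-down side of that choice concrete, here is a minimal sketch using straight-line depreciation with zero salvage value. Both are simplifying assumptions, and the purchase cost and useful life below are hypothetical, not figures from any company's accounts.

```python
def remaining_book_value(purchase_cost: float,
                         useful_life_years: float,
                         years_in_service: float) -> float:
    """Book value left under straight-line depreciation, zero salvage value.

    Retiring the hardware at this point means writing off this amount.
    """
    # Clamp so the value never goes negative or exceeds the purchase cost.
    years = min(max(years_in_service, 0.0), useful_life_years)
    return purchase_cost * (1 - years / useful_life_years)


# Hypothetical inputs for illustration only.
fleet_cost = 1_000_000_000.0   # $1B Blackwell deployment
planned_life = 5.0             # assumed depreciation schedule, in years

# Option 1: run the fleet for its full planned life, so nothing is written off.
write_down_full_life = remaining_book_value(fleet_cost, planned_life, 5.0)

# Option 2: retire the fleet after two years to move to Rubin.
write_down_early = remaining_book_value(fleet_cost, planned_life, 2.0)

print(f"Write-down at end of planned life: ${write_down_full_life:,.0f}")
print(f"Write-down if retired after 2 yrs: ${write_down_early:,.0f}")
```

The shorter the actual service life relative to the schedule, the larger the share of the original purchase that has to be absorbed at once.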

The Groq Acquisition Just Paid Off

One of the most interesting moves inside Vera Rubin is the Groq 3 LPU.

Groq was an AI chip startup founded by former Google engineers who had worked on the original Google TPU.

Its Language Processing Unit architecture was specifically designed for fast, deterministic inference, generating tokens quickly at low latency, which is exactly the bottleneck that limits real-time AI applications.

Nvidia bought Groq in December for $20 billion. At the time, analysts questioned whether the price was justified.

Three months later, the Groq 3 LPU is integrated as a core component of Vera Rubin, delivering 35x higher inference throughput per megawatt and 128GB of on-chip SRAM for handling trillion-parameter models with million-token context windows.

That is the piece that makes Vera Rubin particularly compelling for agentic AI, the kind of multi-step AI systems that are increasingly powering enterprise applications.

Agents need fast, cheap token generation to run complex reasoning chains in real time. The Groq 3 delivers exactly that.

The $20 billion does not look excessive anymore.

Who Is Getting It First

AWS, Google Cloud, Microsoft Azure, and Oracle Cloud are all confirmed as early Vera Rubin deployers in the second half of 2026.

CoreWeave, Lambda, Nebius, and Nscale are next in line among cloud providers. Dell, HPE, Lenovo, and Supermicro will build Rubin-based servers for enterprise customers.

Microsoft’s Fairwater AI superfactories, the massive next-generation data centers it has been building across the US, will run Vera Rubin NVL72 rack-scale systems.

Elon Musk, whose xAI Colossus cluster was built on Blackwell with Supermicro, posted simply: “Nvidia Rubin will be a rocket engine for AI. If you want to train and deploy frontier models at scale, this is the infrastructure you use.”

That endorsement from the operator of the world’s largest single AI cluster carries more weight than most press quotes.

What AMD Is Doing About All This

AMD is not sitting still. Its Helios rack-scale system, featuring the MI430X, MI440X, and MI455X accelerators, targets the same market as a direct competitive response to the NVL72. AMD says Helios is on track for the second half of 2026.

AMD’s problem is not the hardware. Its MI300X performed credibly against Blackwell on several workloads. Its problem is software.

Nvidia’s CUDA ecosystem, nearly two decades in the making, is the default programming environment for virtually every AI developer on earth.

Switching from CUDA to AMD’s ROCm platform requires significant engineering work that most companies did not prioritize while Nvidia chips were readily available.

Vera Rubin deepens that moat. More Nvidia chips in the field means more CUDA code written, more CUDA developers hired, more CUDA optimizations built into every major AI framework.

Every Rubin deployment makes AMD’s already-difficult software catch-up problem harder.

The Bigger Picture

Jensen Huang said at GTC that roughly $10 trillion of computing infrastructure built over the last decade is now being modernized to accelerated computing. That is the market Vera Rubin is being aimed at.

Nvidia’s annual chip cadence, once considered aggressive, has now become the expected rhythm of the AI industry. Blackwell in 2025. Rubin in 2026. Rubin Ultra likely in 2027.

Each generation resets the economics of AI deployment and extends Nvidia’s lead over anyone trying to catch up.

The companies building on today’s best hardware are, by design, building on tomorrow’s second-best. That is not a flaw in the strategy. It is the strategy.

And for now, at least, Nvidia is the only company shipping at the pace that strategy requires.